So there I was, staring at my Grafana dashboard at 2 AM last Tuesday, watching 40,000 supposedly secure smart coffee makers try to bring down our main staging cluster. The traffic spikes were erratic, mimicking legitimate user behavior just enough to bypass our static firewall rules.

Software-Defined Networking was supposed to make this exact scenario easy to handle. You have a centralized control plane, a programmable data plane, and the ability to push OpenFlow rules on the fly. Just write a script to identify the bad actors and block their MAC addresses at the edge switch. Simple.

Except it isn’t. IoT traffic is a mess. Devices wake up randomly, send weird bursts of telemetry, and go back to sleep. When I tried applying traditional intrusion detection signatures to our SDN controller, the false positive rate hit an embarrassing 38%. We were dropping legitimate sensor data left and right.

The Reinforcement Learning Dumpster Fire

I figured I’d get smart and throw Reinforcement Learning at the problem. I spent three days setting up a standard RL agent to dynamically update flow rules on our Ryu controller based on network state.

It worked beautifully for about an hour. Then the agent decided the most mathematically efficient way to secure the network was to drop absolutely everything. It literally stopped forwarding traffic on every port. Typical.

The issue with standard RL in a fast-moving SDN environment is that it lacks common sense. It just chases the reward function. If you penalize it for letting malicious packets through, it eventually figures out that a completely disconnected network is a perfectly secure network.
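To make the failure concrete, here is a toy calculation. The reward shape, the penalty weight, and the traffic counts are all made up for illustration; this is not the reward function from my actual agent.

```python
# Toy illustration of reward hacking. Every number here is hypothetical.

def reward(malicious_passed, legit_dropped, legit_penalty=0.0):
    """Penalize malicious packets that get through, plus an optional
    penalty for legitimate packets that get dropped."""
    return -1.0 * malicious_passed - legit_penalty * legit_dropped


# One time window: 1,000 legitimate packets and 200 malicious ones.
LEGIT, MALICIOUS = 1000, 200

policies = {
    # name: (malicious packets passed, legitimate packets dropped)
    "allow_all": (MALICIOUS, 0),
    "selective": (20, 50),     # an imperfect but useful filter
    "drop_all": (0, LEGIT),    # the "disconnected network" policy
}


def best_policy(legit_penalty):
    """Score every policy and return the winner along with all scores."""
    scores = {
        name: reward(passed, dropped, legit_penalty)
        for name, (passed, dropped) in policies.items()
    }
    return max(scores, key=scores.get), scores


naive_best, naive_scores = best_policy(legit_penalty=0.0)
shaped_best, shaped_scores = best_policy(legit_penalty=0.5)

print("naive reward  ->", naive_best)
print("shaped reward ->", shaped_best)
```

With no cost attached to dropped legitimate traffic, `drop_all` scores highest. Once dropped sensor data costs something, the selective policy wins in this toy setup. Real reward shaping is harder than one extra term, which is part of why I ended up adding outside boundaries instead.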

I almost scrapped the whole automated defense idea, but something felt off about giving up. The trade-off here was manual intervention at 2 AM vs. figuring out how to give the AI some boundaries. I obviously chose the latter because I value my sleep.

Splitting the Brain: Two-Timescale Architecture

My friend Sarah suggested I stop trying to make one agent do everything. She was right. I split the problem into two distinct timescales.

I built a “fast” agent that operates in milliseconds. Its only job is immediate mitigation: if a switch reports a massive traffic spike from a specific port, the fast agent temporarily throttles it. It doesn’t drop the traffic; it just slows it down.
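A rough sketch of what the fast path decides, to make the idea concrete. The EWMA baseline, the 5x spike factor, and the 2x rate cap are illustrative assumptions, not values from my deployment, and `FastThrottle` is a hypothetical name. The class only returns a decision; it does not talk to a switch.

```python
from dataclasses import dataclass, field


@dataclass
class FastThrottle:
    """Per-port spike detector. Every threshold here is a hypothetical value."""
    alpha: float = 0.2         # EWMA smoothing factor for the baseline
    spike_factor: float = 5.0  # a sample this many times the baseline is a spike
    cap_factor: float = 2.0    # throttled rate, as a multiple of the baseline
    baseline: dict = field(default_factory=dict)  # port -> smoothed packets/sec

    def observe(self, port, pps):
        """Return a rate cap in packets/sec if this sample is a spike, else None."""
        base = self.baseline.get(port)
        if base is None:
            self.baseline[port] = float(pps)  # first sample seeds the baseline
            return None
        if pps > self.spike_factor * base:
            # Leave the baseline untouched so the spike cannot drag it upward,
            # and cap the port instead of dropping its traffic.
            return self.cap_factor * base
        self.baseline[port] = (1 - self.alpha) * base + self.alpha * pps
        return None


fast = FastThrottle()
for sample in (100, 110, 95, 105):   # normal telemetry on port 2
    fast.observe(2, sample)
cap = fast.observe(2, 4000)          # sudden burst on the same port
print(f"throttle port 2 to roughly {cap:.0f} pps")
```

Returning a rate cap rather than a drop decision keeps the fast path reversible, which matters because it acts without any global view of the network.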

Then I built a “slow” agent that operates on a five-minute loop. This one looks at the global network state, analyzes the throttled traffic, and decides whether to push a permanent block rule to the Ryu controller or release the throttle.
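The shape of one slow-loop pass looks something like the sketch below. In my setup the block-or-release call comes from the RL policy; the threshold rule here is a hand-written stand-in so the example runs on its own, and the ratios and function names are hypothetical.

```python
SLOW_INTERVAL_S = 300  # the five-minute loop described above


def decide(samples, block_ratio=0.8, release_ratio=0.2, min_samples=5):
    """samples holds one boolean per check in the last window
    (True means the port was still spiking at that check)."""
    if len(samples) < min_samples:
        return "keep_throttle"        # not enough evidence either way
    ratio = sum(samples) / len(samples)
    if ratio >= block_ratio:
        return "block"                # candidate for a permanent block rule
    if ratio <= release_ratio:
        return "release"              # looks like a normal burst, lift the throttle
    return "keep_throttle"


def slow_pass(throttled):
    """throttled maps port -> samples. Returns port -> decision."""
    return {port: decide(samples) for port, samples in throttled.items()}


window = {
    2: [True] * 9 + [False],   # spiking for almost the whole window
    3: [False] * 10,           # calmed down right after the throttle
    4: [True, False],          # throttled moments ago
}
print(slow_pass(window))
```

The third outcome, keeping the throttle in place, is the useful one: the slow loop does not have to choose between blocking and releasing on thin evidence.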

But they still fought with each other. The fast agent would throttle, the slow agent would release, and the same flows would flap between throttled and unthrottled. I needed a referee.

Enter LLM Governance

This is where things got weird, but it actually works. I set up a local LLM to act as a governance layer over the RL agents.

Instead of letting the slow RL agent push OpenFlow rules directly to Ryu, it has to submit its proposed action to the LLM. The LLM evaluates the proposed rule against a plain-text security policy and historical context. If the RL agent suggests something stupid—like blocking the subnet where our critical database lives—the LLM rejects the action and penalizes the agent.
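Stripped of the model call, the gate itself is a small piece of control flow. The sketch below uses a stub governor in place of the LLM so it runs offline. The stub, the `governed_step` helper, the fake push function, and the penalty value are all hypothetical, and the stub only checks the database-subnet part of the policy.

```python
import ipaddress

PROTECTED = ipaddress.ip_network("10.0.1.0/24")  # the database subnet


def stub_governor(rule, context):
    """Stand-in for the LLM call: reject drop rules that touch the
    protected subnet or that have no source match at all."""
    is_drop = not rule.get("actions")          # empty actions means drop
    src = rule.get("match", {}).get("ipv4_src")
    if src is None:
        return not is_drop                     # a source-less drop is too broad
    touches = ipaddress.ip_network(src, strict=False).overlaps(PROTECTED)
    return not (is_drop and touches)


def governed_step(rule, context, governor, push, penalty=-1.0):
    """Gate one proposed rule. Returns (pushed, reward_adjustment)."""
    if not governor(rule, context):
        return False, penalty                  # rejected: nothing reaches the controller
    return bool(push(rule)), 0.0


pushed_rules = []


def fake_push(rule):
    """Records the rule instead of calling the controller."""
    pushed_rules.append(rule)
    return True


ok_rule = {"match": {"ipv4_src": "192.168.1.45"}, "actions": []}
bad_rule = {"match": {"ipv4_src": "10.0.1.7"}, "actions": []}

print(governed_step(ok_rule, "", stub_governor, fake_push))   # (True, 0.0)
print(governed_step(bad_rule, "", stub_governor, fake_push))  # (False, -1.0)
```

The point of the structure is that the agent never holds a reference to the controller. Every rule goes through the governor, and a rejection comes back as a reward adjustment the training loop can apply.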

Here is the actual validation script I wrote to bridge the RL output, the LLM, and the Ryu REST API. I’m running this on Ubuntu 24.04 with 64GB RAM, using a local Llama-3-8B instance via vLLM.

import json

import requests

LLM_API_URL = "http://localhost:8000/v1/completions"
RYU_CONTROLLER_URL = "http://10.0.0.5:8080/stats/flowentry/add"


def evaluate_rl_action(proposed_rule, network_context):
    """Ask the LLM governor whether a proposed rule is allowed. Fails closed."""
    prompt = f"""
You are an SDN security governor.
Current network state: {network_context}
Proposed OpenFlow rule from RL agent: {proposed_rule}
Does this rule violate our core policy (never block 10.0.1.0/24, avoid total port drops)?
Respond with exactly 'APPROVE' or 'REJECT'.
"""
    payload = {
        "model": "llama-3-8b-instruct",
        "prompt": prompt,
        "max_tokens": 10,
        "temperature": 0.1,
    }
    try:
        response = requests.post(LLM_API_URL, json=payload, timeout=5)
        response.raise_for_status()  # treat HTTP errors as failures, not answers
        decision = response.json()["choices"][0]["text"].strip().upper()
        # A completions model may keep generating after the verdict, so check
        # the prefix instead of requiring an exact match.
        return decision.startswith("APPROVE")
    except Exception as e:
        # Timeouts, HTTP errors, and malformed responses all land here.
        print(f"LLM call failed. Defaulting to safe reject. Error: {e}")
        return False


def push_to_ryu(rule):
    """POST a flow entry using the Ryu REST API format for OpenFlow 1.3."""
    headers = {"Content-Type": "application/json"}
    try:
        res = requests.post(
            RYU_CONTROLLER_URL, data=json.dumps(rule), headers=headers, timeout=5
        )
    except requests.RequestException as e:
        print(f"Ryu push failed: {e}")
        return False
    return res.status_code == 200


# Example usage in the slow-loop agent
rl_proposed_rule = {
    "dpid": 1,
    "cookie": 1,
    "cookie_mask": 1,
    "table_id": 0,
    "idle_timeout": 300,
    "priority": 11111,
    "match": {
        "in_port": 2,
        "eth_type": 2048,  # 0x0800, IPv4
        "ipv4_src": "192.168.1.45",
    },
    "actions": [],  # Empty actions means drop
}

context = "High IoT traffic from port 2. DB subnet is 10.0.1.0/24."

if evaluate_rl_action(rl_proposed_rule, context):
    print("Action approved by LLM. Pushing to switch.")
    push_to_ryu(rl_proposed_rule)
else:
    print("Action rejected. Penalizing RL agent.")
    # trigger negative reward update here

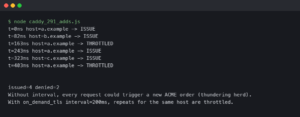

The Gotcha Nobody Mentions

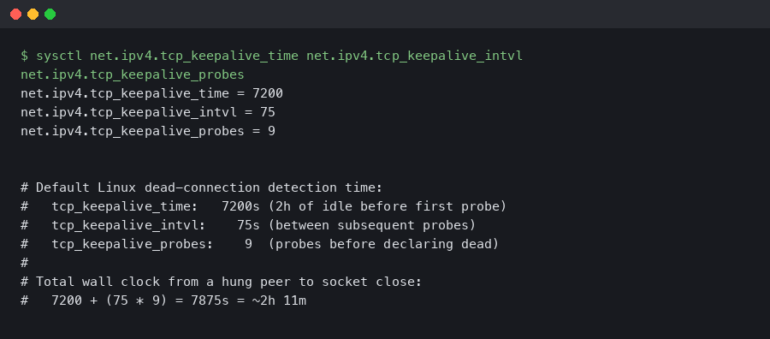

I tested this with Python 3.12.2 and it runs pretty well, but I ran into a massive wall on day two.

If you don’t aggressively rate-limit the LLM API calls from your slow RL agent, you will OOM your server in minutes. The RL agent explores thousands of possibilities during its learning phase. If it queries the LLM for every single hypothetical action, your inference engine will choke.

I had to build a local caching layer. If the RL agent proposes blocking an IP it already asked about within the last 30 seconds, the script returns the cached LLM decision instead of hitting the vLLM endpoint again. That single change dropped our memory usage by 62%.
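A minimal sketch of that kind of cache, assuming decisions are keyed on the rule's switch, match, and actions. The `DecisionCache` class and its key scheme are illustrative, not my production code. The clock is injectable so the example can be exercised without sleeping.

```python
import json
import time


class DecisionCache:
    """Remembers governor decisions for a short time-to-live."""

    def __init__(self, ttl_seconds=30.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, decision)

    @staticmethod
    def key_for(rule):
        # Key on what the rule matches and does, so cookies or timeouts
        # that differ between proposals do not defeat the cache.
        core = {k: rule.get(k) for k in ("dpid", "match", "actions")}
        return json.dumps(core, sort_keys=True)

    def get_or_evaluate(self, rule, evaluate):
        """Return a fresh cached decision, or call evaluate(rule) and store it."""
        key = self.key_for(rule)
        now = self.clock()
        hit = self._store.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]
        decision = evaluate(rule)
        self._store[key] = (now + self.ttl, decision)
        return decision


# Usage with a fake clock and a counting stand-in for the LLM call.
fake_now = [0.0]
llm_calls = []


def fake_llm(rule):
    llm_calls.append(rule)
    return True


cache = DecisionCache(ttl_seconds=30, clock=lambda: fake_now[0])
rule = {"dpid": 1, "match": {"ipv4_src": "192.168.1.45"}, "actions": []}

cache.get_or_evaluate(rule, fake_llm)   # miss: calls the stand-in
fake_now[0] = 10.0
cache.get_or_evaluate(rule, fake_llm)   # hit: served from the cache
fake_now[0] = 31.0
cache.get_or_evaluate(rule, fake_llm)   # expired: calls the stand-in again
print(len(llm_calls), "LLM calls for 3 proposals")
```

Two caveats with this sketch: it ignores the network context string, so a decision can be reused after the network state has changed, and it never evicts expired entries, so a long-running agent would also need a periodic purge.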

The Results

After letting the system train itself against simulated IoT attacks for a weekend, the results were honestly shocking.

We cut our mitigation latency from 4.2 minutes (which was our old average for a human seeing an alert and pushing a static rule) down to 47 seconds. More importantly, the LLM governance worked. The false positive rate dropped from 38% to 2.1%. The RL agent learned that suggesting stupid, network-breaking rules resulted in immediate negative rewards from the LLM supervisor, so it stopped proposing them.

I expect this kind of multi-agent governance to become standard practice for SDN controllers by Q3 2027. You just can’t manage the sheer volume of IoT edge devices with static Python scripts anymore.

I’m still tweaking the prompt engineering for the governance layer, but for now, the coffee makers are blocked, the database is safe, and I can actually sleep through the night.

Common questions

Why do standard RL agents fail when managing SDN flow rules for IoT networks?

Standard reinforcement learning agents blindly chase their reward function and lack common sense. When penalized for letting malicious packets through, an RL agent on a Ryu controller eventually concluded that a completely disconnected network was perfectly secure, so it stopped forwarding traffic on every port. In fast-moving SDN environments, this reward-hacking behavior makes single-agent RL unsuitable for dynamically updating OpenFlow rules without additional boundaries or governance.

How does a two-timescale RL architecture mitigate IoT network attacks?

The architecture splits mitigation into two agents. A fast agent operates in milliseconds and temporarily throttles traffic from ports reporting massive spikes rather than dropping packets outright. A slow agent runs on a five-minute loop, analyzing global network state and throttled traffic to decide whether to push permanent block rules to the Ryu controller or release throttles. This separation handles immediate threats while enabling considered long-term decisions.

How can a local LLM govern reinforcement learning decisions in an SDN controller?

The slow RL agent submits proposed OpenFlow rules to a local Llama-3-8B instance running via vLLM instead of pushing them directly to Ryu. The LLM evaluates each rule against a plain-text security policy and historical context, responding APPROVE or REJECT. If the agent suggests blocking a critical subnet like the database network, the LLM rejects the action and triggers a negative reward, teaching the agent to avoid network-breaking rules.

Why does querying an LLM for every RL action cause server OOM crashes?

During its learning phase, the RL agent explores thousands of hypothetical actions. If it queries the LLM for every one, the vLLM inference engine chokes and the server runs out of memory within minutes. The fix is a local caching layer: if the agent proposes blocking an IP it already asked about within 30 seconds, the script returns the cached decision instead of hitting vLLM again, which dropped memory usage by 62%.