It was 2:42 AM last Tuesday when my phone buzzed off the nightstand. The alert was simple, terrifying, and completely vague: p99 latency > 3000ms on our primary RPC gateway. I stumbled to my desk, opened the dashboard, and saw a wall of red. Throughput hadn’t budged (we were pushing the usual 45,000 requests per second), but the latency had exploded. My first instinct? “The network is clogged.” I blamed the ISP. I blamed the cloud provider. I almost blamed the solar flares.

But I was wrong. The network pipes were fine. The problem was inside the house, specifically our scheduler queues. We had optimized everything for “maximum throughput” but forgot that in high-load scenarios, a naive First-In-First-Out (FIFO) queue is basically a death sentence for performance. And if you’re building high-performance network systems in 2026 — whether for fintech, gaming, or just a really ambitious chat app — you need to stop obsessing over bandwidth and start looking at your scheduler.

The “Bufferbloat” of Application Logic

Here’s the thing. When we talk about network performance, we usually visualize data moving through cables. But once that packet hits your NIC, it enters a brutal war for CPU time. And in our case, we were running a Rust-based service on tokio (standard async runtime stuff). We assumed that because async is “non-blocking,” we were safe. But async runtimes still have task queues. And when a burst of traffic hits — say, a market dip triggers a thousand trading bots to fire off sell orders simultaneously — those requests get pushed into the scheduler’s queue.

If your worker threads are busy processing complex logic (signatures, DB writes), that queue grows. And grows. A request might sit in the “ready” state for 2 seconds before a CPU core even touches it. To the user, that’s network lag. To the engineer, it’s thread starvation.
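To put a number on that, here is a minimal sketch of the arithmetic, not our production code. It assumes a single worker draining a FIFO queue, and the burst size (1,000 requests) and per-request cost (2 ms) are made-up figures chosen only to show how a short burst turns into seconds of waiting. `fifo_queue_waits` is a hypothetical helper.

```rust
/// Simulate one worker draining a FIFO queue.
/// `arrivals` holds (arrival_ms, service_ms) pairs, sorted by arrival time.
/// Returns how long each request sat in the queue before the worker touched it.
fn fifo_queue_waits(arrivals: &[(u64, u64)]) -> Vec<u64> {
    let mut worker_free_at = 0u64; // when the worker finishes its current task
    let mut waits = Vec::with_capacity(arrivals.len());
    for &(arrival_ms, service_ms) in arrivals {
        // A request starts only once it has arrived AND the worker is free.
        let start = worker_free_at.max(arrival_ms);
        waits.push(start - arrival_ms);
        worker_free_at = start + service_ms;
    }
    waits
}

fn main() {
    // Hypothetical burst: 1,000 requests land at t=0, each needing 2 ms of CPU.
    let burst: Vec<(u64, u64)> = (0..1000).map(|_| (0, 2)).collect();
    let waits = fifo_queue_waits(&burst);
    let worst = waits.iter().max().unwrap();
    // prints "last request waited 1998 ms before running"
    println!("last request waited {worst} ms before running");
}
```

Nothing about the network changed in that simulation. The last request in line simply pays for every request ahead of it.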

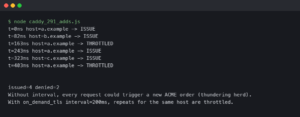

Priority Queues or Bust

And the fix wasn’t “more servers.” We’d tried scaling horizontally during the Q4 2025 rush, and it barely dented the latency spikes because the bottleneck was the coordination overhead. Instead, we had to implement priority scheduling. Not all packets are created equal. A “heartbeat” or “vote” message in a distributed system needs to be processed now. A “history lookup” request can wait 500ms without anyone crying about it.

We ripped out our standard bounded channel and replaced it with a priority-aware structure. It’s messy, I won’t lie. You have to tag every incoming packet with a weight. But the results? We dropped our p99 latency from 4.2 seconds down to 140ms under the same load. This is consistent with findings from research on priority scheduling in network stacks.
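For a rough idea of the shape, here is a simplified sketch using only the standard library. It is not the code we run: `Task`, `PriorityQueue`, the `CRITICAL` threshold, and the weight scale are all hypothetical names and values picked for illustration, and the real thing sits inside an async runtime rather than a plain `BinaryHeap`.

```rust
use std::cmp::Ordering;
use std::collections::BinaryHeap;

/// Weights at or above this value are never shed (hypothetical threshold).
const CRITICAL: u8 = 8;

struct Task {
    weight: u8,         // higher means more urgent
    seq: u64,           // arrival order, keeps FIFO order within one weight
    name: &'static str, // stand-in for the real request payload
}

impl Ord for Task {
    fn cmp(&self, other: &Self) -> Ordering {
        // BinaryHeap is a max-heap: higher weight wins, then the older task.
        self.weight
            .cmp(&other.weight)
            .then_with(|| other.seq.cmp(&self.seq))
    }
}
impl PartialOrd for Task {
    fn partial_cmp(&self, other: &Self) -> Option<Ordering> {
        Some(self.cmp(other))
    }
}
impl PartialEq for Task {
    fn eq(&self, other: &Self) -> bool {
        self.cmp(other) == Ordering::Equal
    }
}
impl Eq for Task {}

struct PriorityQueue {
    heap: BinaryHeap<Task>,
    capacity: usize,
    next_seq: u64,
    shed: u64, // how many tasks were dropped on purpose
}

impl PriorityQueue {
    fn new(capacity: usize) -> Self {
        Self { heap: BinaryHeap::new(), capacity, next_seq: 0, shed: 0 }
    }

    /// Returns true if the task was queued, false if it was shed.
    /// Critical tasks bypass the capacity check in this sketch.
    fn push(&mut self, weight: u8, name: &'static str) -> bool {
        let task = Task { weight, seq: self.next_seq, name };
        self.next_seq += 1;
        if self.heap.len() >= self.capacity && weight < CRITICAL {
            // Queue is full and this work can be retried later: shed it.
            self.shed += 1;
            drop(task);
            return false;
        }
        self.heap.push(task);
        true
    }

    fn pop(&mut self) -> Option<Task> {
        self.heap.pop()
    }
}

fn main() {
    let mut q = PriorityQueue::new(2);
    q.push(1, "history lookup A");
    q.push(1, "history lookup B");
    let accepted = q.push(1, "history lookup C"); // queue full: shed
    q.push(9, "heartbeat"); // critical: admitted anyway
    println!("C accepted: {accepted}, shed so far: {}", q.shed);
    while let Some(task) = q.pop() {
        println!("run {} (weight {})", task.name, task.weight);
    }
}
```

The heartbeat arrives last but runs first, and the third history lookup never gets queued at all.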

The “Shedding” Taboo

The part that scares people is the drop(task) call: when the queue is full, low-priority work is thrown away on purpose. Management hates hearing “we are intentionally deleting user requests.” But backpressure is the only thing saving you from a total outage. If you accept more work than you can process, you eventually run out of RAM (OOM killer says hi) or latency becomes so high the client times out anyway. A dropped packet is a retry. A 30-second hang is a user uninstalling your app. The Communications of the ACM article “The Tail at Scale” makes a related case: treating latency-critical work differently from work that can wait is a key technique for maintaining service-level objectives.

And we enabled aggressive load shedding on non-critical endpoints starting Feb 10th. Since then, our uptime has been 100%, even during those weird traffic surges we saw last weekend.
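The admission side of backpressure can be sketched with nothing but a bounded channel. This is an illustration under assumptions, not our gateway code: it uses the standard library's `sync_channel` instead of an async channel, the capacity of 2 is artificially small, and `Admit` and `admit` are hypothetical names.

```rust
use std::sync::mpsc::{sync_channel, SyncSender, TrySendError};

#[derive(Debug, PartialEq)]
enum Admit {
    Accepted,
    Shed,   // queue full: rejected immediately
    Closed, // the worker side has gone away
}

/// Try to enqueue without blocking. A full queue means "no", not "wait".
fn admit(tx: &SyncSender<String>, request: String) -> Admit {
    match tx.try_send(request) {
        Ok(()) => Admit::Accepted,
        Err(TrySendError::Full(rejected)) => {
            // Reject right away so the client can retry or back off.
            drop(rejected);
            Admit::Shed
        }
        Err(TrySendError::Disconnected(_)) => Admit::Closed,
    }
}

fn main() {
    // Bounded queue with room for two pending requests.
    let (tx, rx) = sync_channel::<String>(2);
    for i in 0..4 {
        let outcome = admit(&tx, format!("req-{i}"));
        println!("req-{i}: {outcome:?}");
    }
    // A worker would normally drain `rx` on another thread.
    while let Ok(req) = rx.try_recv() {
        println!("processed {req}");
    }
}
```

The key design choice is `try_send` over a blocking `send`: the caller finds out immediately that the system is saturated, instead of joining an invisible line.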

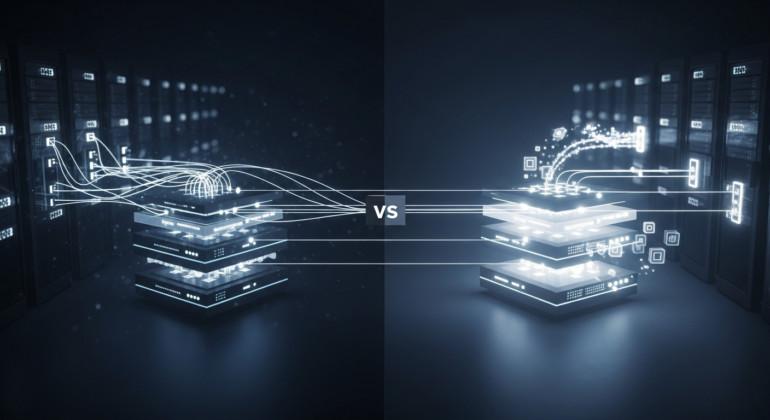

QUIC vs. TCP: The Head-of-Line Blocking Problem

Another area where we saw massive gains was switching our internal service-to-service communication to QUIC. We had been using gRPC over HTTP/2, which is great, but TCP head-of-line blocking is a real pain when you have packet loss.

In a congested network, if one TCP packet drops, the kernel holds back every byte behind it on that connection until the retransmit arrives, so all the HTTP/2 streams multiplexed over that connection stall together. QUIC (which runs over UDP) tracks ordering per stream instead. Stream A can drop a packet, and Stream B keeps flowing. We moved our heaviest data ingestion service to QUIC last month. On perfect networks (AWS region to same AWS region), it made zero difference. But for connections coming from our APAC nodes, where jitter is higher, throughput improved by nearly 40%. That’s significant when you’re moving terabytes of data. The benefits of QUIC over TCP in high-latency environments are well-documented in Cloudflare’s analysis of QUIC.
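Here is a toy model of the difference, with heavy simplifications: each packet carries data for exactly one stream, packets are listed in send order, and exactly one packet is lost. The `Packet` type and both functions are hypothetical, and no real TCP or QUIC stack is involved. The question it answers is which packets the application can read while the retransmit is still in flight.

```rust
#[derive(Clone, Copy)]
struct Packet {
    seq: u32,     // position in send order
    stream: char, // which logical stream the payload belongs to
}

/// TCP-like: one ordered byte stream for the whole connection.
/// Nothing at or after the lost packet can be handed to the application.
fn deliverable_single_ordered(packets: &[Packet], lost_seq: u32) -> Vec<u32> {
    packets
        .iter()
        .take_while(|p| p.seq != lost_seq)
        .map(|p| p.seq)
        .collect()
}

/// QUIC-like: ordering is enforced per stream.
/// Only later packets on the SAME stream as the lost one are held back.
fn deliverable_per_stream(packets: &[Packet], lost_seq: u32) -> Vec<u32> {
    let lost_stream = packets
        .iter()
        .find(|p| p.seq == lost_seq)
        .map(|p| p.stream);
    packets
        .iter()
        .filter(|p| Some(p.stream) != lost_stream || p.seq < lost_seq)
        .map(|p| p.seq)
        .collect()
}

fn main() {
    // Two streams interleaved on one connection; packet 3 (stream A) is lost.
    let packets = [
        Packet { seq: 1, stream: 'A' },
        Packet { seq: 2, stream: 'B' },
        Packet { seq: 3, stream: 'A' },
        Packet { seq: 4, stream: 'B' },
        Packet { seq: 5, stream: 'A' },
        Packet { seq: 6, stream: 'B' },
    ];
    // prints "single ordered stream: [1, 2]"
    println!("single ordered stream: {:?}", deliverable_single_ordered(&packets, 3));
    // prints "per-stream ordering:   [1, 2, 4, 6]"
    println!("per-stream ordering:   {:?}", deliverable_per_stream(&packets, 3));
}
```

With a single ordered stream, stream B's packets 4 and 6 have arrived but sit unread behind the hole. With per-stream ordering, only stream A waits.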

Don’t Trust the Defaults

The biggest lesson I’ve learned this year is that defaults are for prototypes. The default Tokio runtime settings, the default Linux TCP congestion control (usually CUBIC, with BBR available only if you enable it, and either one may need tuning), the default channel buffer sizes: they are all designed for “average” use cases.
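Before tuning anything, it helps to check what a host is actually running. The sketch below reads the congestion control settings that Linux exposes under `/proc/sys/net/ipv4/`. It assumes a Linux host, and `parse_sysctl` and `read_sysctl` are hypothetical helper names. On other systems the files do not exist and the helper returns `None`.

```rust
use std::fs;

/// Trim the raw contents of a procfs sysctl file; empty content becomes None.
fn parse_sysctl(raw: &str) -> Option<String> {
    let value = raw.trim();
    if value.is_empty() {
        None
    } else {
        Some(value.to_string())
    }
}

/// Read a sysctl value from procfs, or None if the file is missing or unreadable.
fn read_sysctl(path: &str) -> Option<String> {
    fs::read_to_string(path)
        .ok()
        .and_then(|raw| parse_sysctl(&raw))
}

fn main() {
    // Algorithm currently used for new TCP connections.
    let current = read_sysctl("/proc/sys/net/ipv4/tcp_congestion_control");
    // Algorithms the running kernel has available.
    let available = read_sysctl("/proc/sys/net/ipv4/tcp_available_congestion_control");

    match current {
        Some(algo) => println!("congestion control in use: {algo}"),
        None => println!("could not read the setting (not Linux, or no access)"),
    }
    if let Some(list) = available {
        println!("available algorithms: {list}");
    }
}
```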

If you are pushing performance boundaries, you have to tune these. And so, next time your latency spikes, don’t just blame the network. Look at your queues. Look at your scheduler. And maybe, just maybe, be brave enough to drop some packets.

Frequently asked questions

Why does my p99 latency spike when throughput looks normal?

Latency spikes at steady throughput usually point to scheduler queue buildup, not network congestion. When worker threads are busy with complex logic like signatures or DB writes, incoming requests sit in the async runtime’s ready queue waiting for CPU time. A request can wait seconds before a core touches it. Users perceive this as network lag, but it’s actually thread starvation inside your application.

How much can priority scheduling reduce tail latency in a Rust tokio service?

Replacing a standard bounded FIFO channel with a priority-aware structure dropped p99 latency from 4.2 seconds to 140ms under identical load in the author’s Rust tokio-based RPC gateway. The tradeoff is implementation messiness: every incoming packet must be tagged with a weight so critical messages like heartbeats or votes get processed ahead of tolerant requests like history lookups that can wait 500ms.

Is it safe to intentionally drop user requests under load?

Aggressive load shedding on non-critical endpoints is safer than accepting more work than you can process. If you don’t shed, you eventually hit OOM kills or latencies so high clients time out anyway. A dropped packet becomes a retry, but a 30-second hang becomes an uninstall. The author enabled shedding on February 10th and has maintained 100% uptime through subsequent traffic surges.

When does switching from gRPC over HTTP/2 to QUIC actually help?

QUIC helps most on lossy, high-jitter links because it avoids TCP’s head-of-line blocking: one dropped packet in Stream A doesn’t stall Stream B. On same-region AWS connections the author saw zero difference after migrating a heavy ingestion service. But for APAC nodes with higher jitter, throughput improved by nearly 40%, which matters significantly when moving terabytes of data between services.