I spent three hours yesterday staring at an Azure routing table. Just staring. Two microservices in different virtual networks absolutely refused to talk to each other over a peering connection, dropping packets into a black hole. The culprit? An overlapping CIDR block buried four layers deep in a Terraform module that someone copy-pasted last November.

Networking in DevOps is usually an afterthought. People care about pipelines. They care about container registries. IP math? Not so much. You just grab 10.0.0.0/16, throw it in your variables file, and assume you’ll never run out of space.

And then you start spinning up ephemeral environments for every pull request.

The Hardcoding Trap

When you build your first few infrastructure-as-code pipelines, it is incredibly tempting to hardcode your address spaces. You define your main VNet, carve out a few /24 subnets for web, app, and database tiers, and call it a day. It works perfectly for production. It works fine for staging.

But when you scale out your CI/CD to provision dynamic infrastructure on demand, hardcoded strings break everything. If PR #405 and PR #406 both deploy at the same time using the same default variables, you get an immediate deployment collision. Azure throws a conflict error, your pipeline turns red, and developers start complaining in Slack.

You have to calculate subnets dynamically.

Doing the Math in Terraform

Terraform has built-in functions for this. I rely heavily on cidrsubnet. It takes a base prefix and slices it up for you based on the bits you want to add.
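To make the arguments concrete, here are a few standalone calls. The base prefix and local names are illustrative only and not part of any real module.

```hcl
locals {
  # Illustrative base prefix, not tied to any real environment
  example_base = "10.5.0.0/16"

  # /16 + 8 new bits = /24; the network number selects which /24
  first_24 = cidrsubnet(local.example_base, 8, 0)   # "10.5.0.0/24"
  last_24  = cidrsubnet(local.example_base, 8, 255) # "10.5.255.0/24"

  # /16 + 4 new bits = /20; only 16 network numbers (0-15) are valid here
  second_20 = cidrsubnet(local.example_base, 4, 1) # "10.5.16.0/20"
}
```

The network number has to fit in the bits you add. With 8 new bits, asking for network number 256 is an error rather than a silent wraparound.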

```hcl
locals {
  # The base network assigned to this specific environment
  base_cidr = var.environment_address_space

  # Add 8 bits to the mask (e.g., /16 becomes /24)
  # The third argument is the network number
  aks_subnet = cidrsubnet(local.base_cidr, 8, 1)
  db_subnet  = cidrsubnet(local.base_cidr, 8, 2)
  app_subnet = cidrsubnet(local.base_cidr, 8, 3)
}

resource "azurerm_virtual_network" "main" {
  name                = "vnet-${var.env_name}"
  location            = azurerm_resource_group.rg.location
  resource_group_name = azurerm_resource_group.rg.name
  address_space       = [local.base_cidr]
}

resource "azurerm_subnet" "aks" {
  name                 = "snet-aks"
  resource_group_name  = azurerm_resource_group.rg.name
  virtual_network_name = azurerm_virtual_network.main.name
  address_prefixes     = [local.aks_subnet]
}
```

This looks clean. You pass a base CIDR like 10.5.0.0/16 into the module, and it automatically assigns 10.5.1.0/24 to the AKS cluster and 10.5.2.0/24 to the database. No manual math required.
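For per-PR environments, the base CIDR itself can be derived the same way instead of being typed in by hand. This is a minimal sketch, not a recommendation. The `pr_number` variable and the `10.0.0.0/8` pool are assumptions made up for illustration. You would substitute whatever range your organization actually reserves for ephemeral environments.

```hcl
variable "pr_number" {
  type        = number
  description = "Pull request number supplied by the CI pipeline (hypothetical input)"
}

locals {
  # Hypothetical pool set aside for ephemeral environments
  ephemeral_pool = "10.0.0.0/8"

  # /8 + 8 new bits = /16, which gives 256 slots.
  # PR 405 lands in slot 149 (405 % 256), i.e. 10.149.0.0/16.
  # PR 406 lands in slot 150, i.e. 10.150.0.0/16.
  environment_address_space = cidrsubnet(local.ephemeral_pool, 8, var.pr_number % 256)
}

output "environment_address_space" {
  value = local.environment_address_space
}
```

The modulo keeps the network number in range, but it also means two open PRs whose numbers are 256 apart get the same block. That is fine for a small team and a real limit for a large one.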

The Gotcha

Here is where things get dangerous. The cidrsubnet function relies entirely on that third argument—the network number index.

If you come back three months later and decide you need a dedicated subnet for a Redis cache, and you slot it in alphabetically at index 2, bumping everything after it, you just shifted the database to index 3 and the app to index 4. Terraform evaluates this as a configuration change. It will try to destroy your existing subnets and recreate them with the new IP ranges.

If you have resources running in those subnets, Azure will block the deletion with a SubnetIsInUse error. The pipeline fails. But if the subnet happens to be empty or contains disposable resources, Terraform just deletes it. I learned this the hard way on a staging cluster running the AzureRM provider v4.2.0. We wiped out the ingress routing for half the engineering team because I reordered a list.

Never change the index numbers of existing subnets. Append new ones to the end. Always.
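One way to make that rule harder to break is to keep the network numbers in an explicit map, so a subnet's index is tied to its name rather than its position in the file. This is a sketch under assumptions. The `subnet_netnums` and `subnets` locals are hypothetical names, and the numbers mirror the example module above with Redis appended as 4.

```hcl
variable "environment_address_space" {
  type        = string
  description = "Base CIDR for this environment, e.g. 10.5.0.0/16"
}

locals {
  # Each subnet owns its network number. Key order in this map does not matter.
  # Existing entries are never renumbered; new subnets take the next free number.
  subnet_netnums = {
    aks   = 1
    db    = 2
    app   = 3
    redis = 4 # added later, so it gets the next unused number
  }

  # Map of subnet name => CIDR, e.g. redis => "10.5.4.0/24" for a 10.5.0.0/16 base
  subnets = {
    for name, netnum in local.subnet_netnums :
    name => cidrsubnet(var.environment_address_space, 8, netnum)
  }
}

output "subnets" {
  value = local.subnets
}
```

Because the number lives next to the name, sorting the map alphabetically changes nothing in the plan. If you later feed this map into a `for_each` on existing subnet resources, their resource addresses change, so plan that migration separately.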

Moving to an IPAM

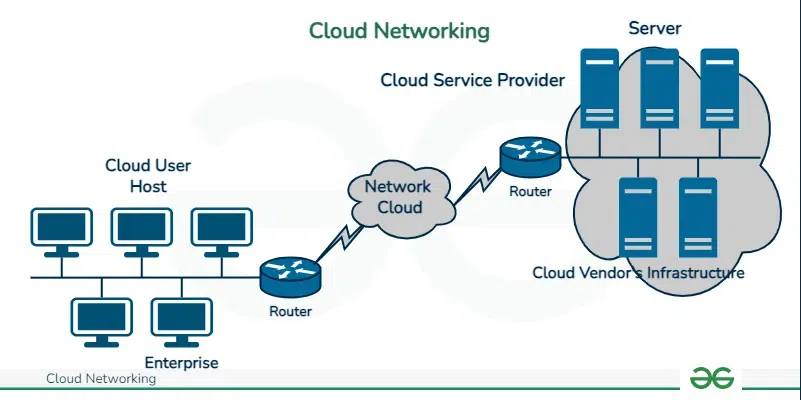

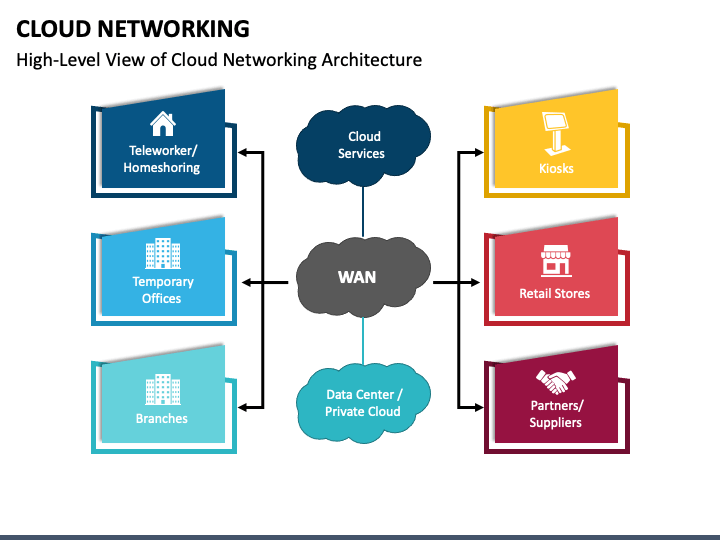

Eventually, even dynamic math inside your IaC isn’t enough. When you have dozens of subscriptions, peered networks, and VPN gateways connecting back to on-premise hardware, calculating IPs locally guarantees an eventual overlap.

Stop using local math for large-scale environments. Ask an authority.

We recently moved to querying NetBox 3.7.2 directly in our Terraform runs via its API. Instead of passing a base CIDR to the module, the pipeline asks NetBox for the next available /24 block in the staging aggregate. NetBox hands back a string. We pass that to the Azure VNet resource.

State drift vanished almost entirely after we made this switch. We cut our network-related deployment failure rate from around 14% to practically zero. The infrastructure code doesn’t know or care what the IP address is. It just requests space, consumes it, and releases it when the ephemeral environment is destroyed.
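The request, consume, and release cycle can be sketched in Terraform itself. Everything here is an assumption rather than our exact setup. It uses the community `e-breuninger/netbox` provider instead of raw API calls, assumes the staging pool is modelled in NetBox as a parent prefix, and uses hypothetical variable names. Argument names vary between provider releases (older ones use `cidr` where this uses `prefix`), so check the documentation for the release that matches your NetBox version.

```hcl
terraform {
  required_providers {
    netbox = {
      source = "e-breuninger/netbox" # community provider, assumed here
    }
  }
}

# Hypothetical inputs supplied by the pipeline
variable "netbox_url" { type = string }
variable "netbox_token" {
  type      = string
  sensitive = true
}
variable "staging_pool_cidr" {
  type        = string
  description = "Parent prefix in NetBox that ephemeral environments are carved from"
}

provider "netbox" {
  server_url = var.netbox_url
  api_token  = var.netbox_token
}

# Look up the parent prefix that represents the staging pool
data "netbox_prefix" "staging_pool" {
  prefix = var.staging_pool_cidr
}

# Ask NetBox for the next free /24 inside that pool.
# Destroying this resource hands the block back to NetBox.
resource "netbox_available_prefix" "env" {
  parent_prefix_id = data.netbox_prefix.staging_pool.id
  prefix_length    = 24
  status           = "active"
}

output "environment_address_space" {
  value = netbox_available_prefix.env.prefix
}
```

Since the allocation is a resource in state, the block is reserved on apply and released on destroy with no extra pipeline step.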

If you’re still tracking your cloud network spaces in a spreadsheet, stop. Set up a basic IPAM, hook your pipelines into it, and let the machines handle the CIDR math. Humans are terrible at it anyway.